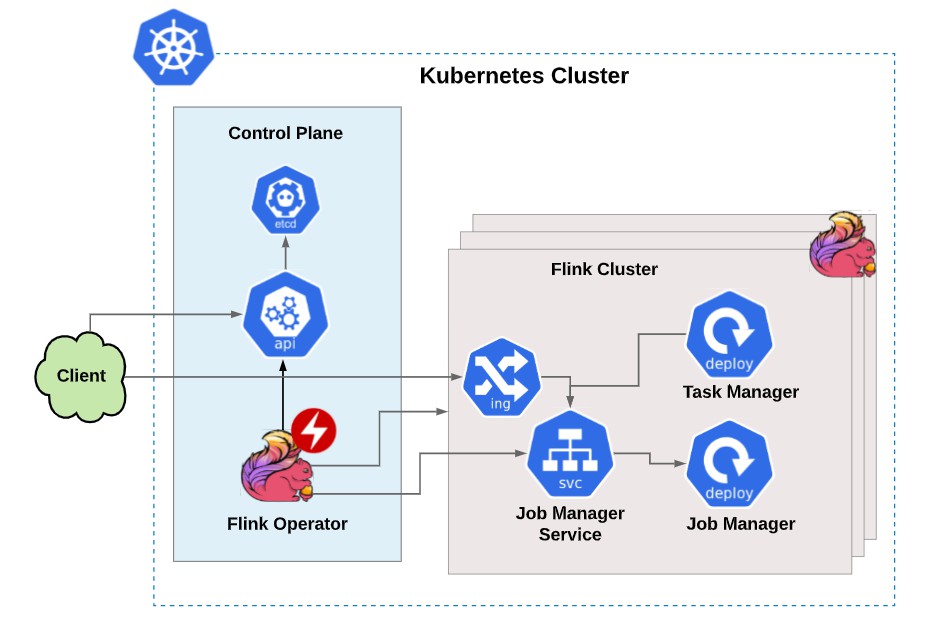

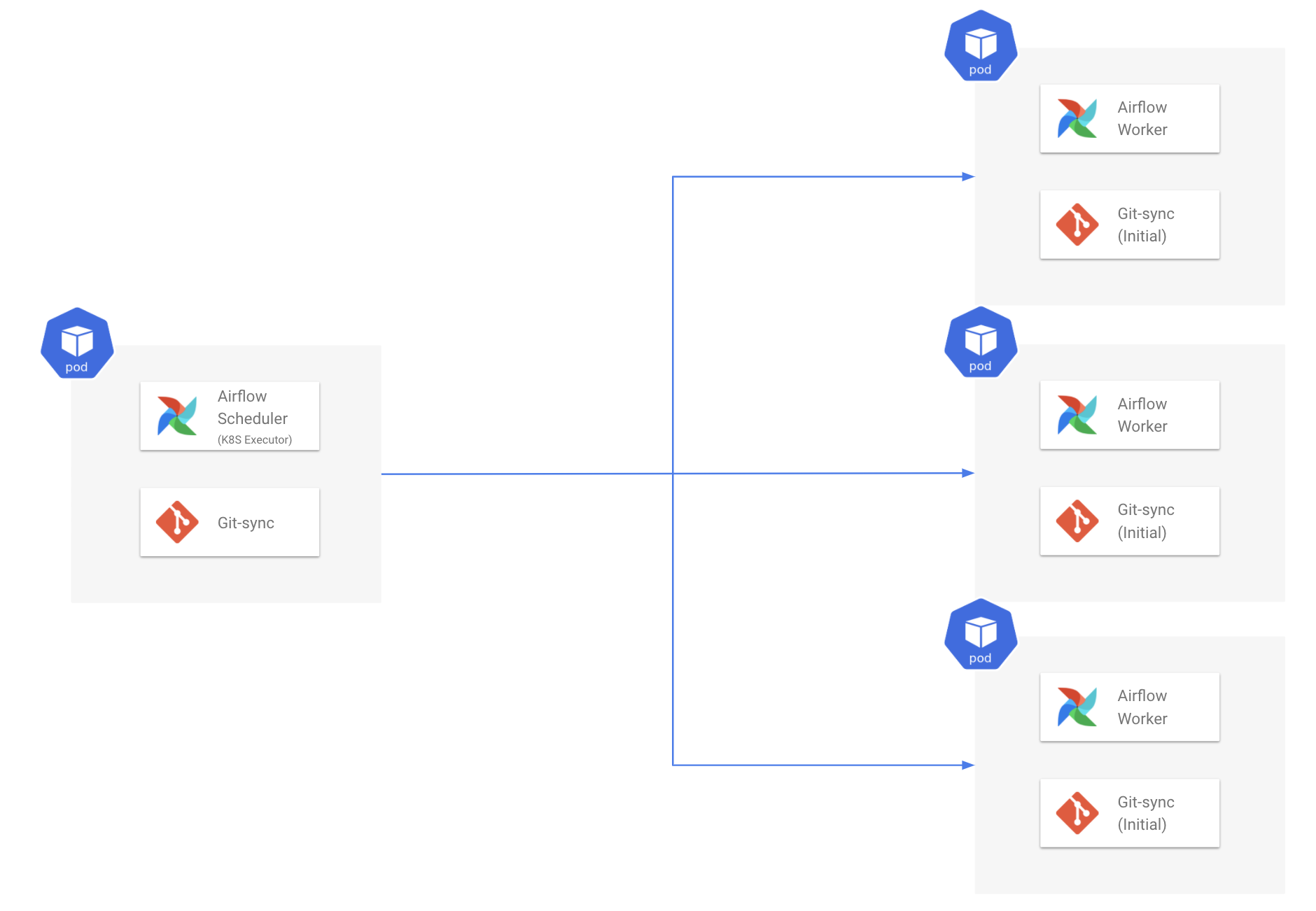

Airflow already has operators for different frameworks such as BigQuery, Apache Spark and Hive, and it also allows developers to write their own connectors for other requirements. But a company usually has multiple workflows for different purposes, such as data science pipelines and production applications. These differences in purpose lead to dependency management problems, because the teams behind each workflow use different libraries for their different use cases. Kubernetes solves this problem by allowing users to create and launch different Kubernetes configurations and pods. Once the pods are set up, Airflow users have full control over resources, run environments and secrets, which makes Airflow more powerful and lets users run any job they want through Airflow workflows.

Developers who use Airflow consistently try to make deployments and ETL (Extract, Transform and Load) pipelines easier to manage. To achieve this, developers and DevOps engineers are always looking for ways to decouple pipeline steps and increase monitoring, which reduces the risk of future outages and of fire-fights (emergency allocation of resources in response to unexpected scenarios such as extra stress or load). Using Airflow with Kubernetes helps with the following:

More flexibility when deploying applications: The plugin API for Airflow offers great support when engineers want to test new features implemented in their DAGs. However, whenever a developer wrote a new operator, they had to build a whole new plugin. Now, with Airflow on Kubernetes, they can run any task inside a Docker container using the exact same operator, with no extra Airflow code to maintain.

More flexibility for dependencies and configurations: For operators that run within static Airflow workers, dependencies become cumbersome to manage. As a hypothetical example, suppose an Airflow developer uses SciPy (a Python library) for task A, while task B requires NumPy (another Python library). The developer would need either to take care of both dependencies on all Airflow workers (not an easy task) or to offload the task to an external machine (which may create issues if something unexpected happens to that machine). Custom Docker images let Airflow users be sure that the environment, dependencies and configuration for each task work as expected.

Security of sensitive data (such as credentials and client details) is a main concern of the development team, and passwords, API keys and other important credentials need to be isolated for security purposes. With the Kubernetes Operator, the responsible team can use Kubernetes Vault technology to store any sensitive data. With Kubernetes Vault, only the people who need it have access to the sensitive data, whereas previously all workers usually had access.

The Kubernetes Operator uses the Python client for Kubernetes to create a request, which is then processed by the APIServer (1). The desired pod is then launched according to the defined specifications (2). The necessary image(s) are loaded according to the defined parameters with a single command. After the job is launched, the operator only monitors the health of the tracked logs.
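As a rough illustration of the ideas in this post, the sketch below builds the kind of pod specification that could be sent to the APIServer for two tasks with different dependencies. It is not Airflow's own code: the helper `build_pod_manifest`, the image names under `example.com`, and the secret name `db-credentials` are all hypothetical, and the sketch assumes the sensitive value is stored in a Kubernetes Secret object that the pod references by name rather than holding the value itself.

```python
import json


def build_pod_manifest(task_id, image, command, secret_env=None,
                       cpu="500m", memory="512Mi"):
    """Return a Kubernetes Pod manifest (a plain dict) for one task.

    secret_env maps an environment variable name to a
    (secret_name, secret_key) pair, so the manifest only references
    the secret and never contains the sensitive value.
    """
    env = [
        {
            "name": env_name,
            "valueFrom": {
                "secretKeyRef": {"name": secret_name, "key": secret_key}
            },
        }
        for env_name, (secret_name, secret_key) in (secret_env or {}).items()
    ]
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": f"airflow-{task_id}", "labels": {"task_id": task_id}},
        "spec": {
            "restartPolicy": "Never",  # a task pod runs once and exits
            "containers": [
                {
                    "name": "base",
                    "image": image,  # each task brings its own dependencies
                    "command": command,
                    "env": env,
                    "resources": {"limits": {"cpu": cpu, "memory": memory}},
                }
            ],
        },
    }


# Hypothetical images: task A ships SciPy, task B ships NumPy.
task_a = build_pod_manifest(
    "task-a",
    "example.com/data/scipy-task:1.0",
    ["python", "task_a.py"],
    secret_env={"DB_PASSWORD": ("db-credentials", "password")},
)
task_b = build_pod_manifest(
    "task-b",
    "example.com/data/numpy-task:1.0",
    ["python", "task_b.py"],
)

if __name__ == "__main__":
    print(json.dumps(task_a, indent=2))
```

Each task gets its own image, resource limits and secret references, so neither dependency set has to be installed on every Airflow worker, and only the pod that declares a secret reference can read that secret.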
